It's an old design pattern that is more relevant now. You can have different parts of a calculation run on different machines that talk to each other, and hence run the calculation faster.

Google has its own implementation, which is similar to the Cloudera implementation. Amazon has "Hadoop Lite", and CouchDB and MongoDB have an implementation running inside them. Each one solves specific problems.

MapReduce is basically made up of MAP and REDUCE. MAP is stateless and independent; for REDUCE we need to think about how it fits into the overall flow, so there is a separation. Take a sorting problem: each node needs to sort its own data and feed it into the reducer.

Suppose a cluster of N nodes, each holding data and running a piece of code F(x) (such as sum, avg, etc.). The data is not separated by any criterion: it is not the case that one node has the September data and another the October data, nor is the data separated by product type. The data is evenly distributed, and each piece of data is independent.

Each of the nodes outputs key-value pairs, for example a date and a dollar amount (sales).

It seems you can have more than one reducer. All the key-value pairs come to the reducer, which gives you a key-value pair for any key, i.e. the sales on any given day.
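The flow above can be sketched in plain Java, without any MapReduce framework. This is only an illustration under stated assumptions: the class name `DailySalesSketch`, the `"date,amount"` record format, and the in-memory lists standing in for nodes are all hypothetical, not part of any real API.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

class DailySalesSketch {

    // MAP: runs independently on each node; each input line is "date,amount".
    static List<Map.Entry<String, Double>> map(List<String> nodeRecords) {
        List<Map.Entry<String, Double>> pairs = new ArrayList<>();
        for (String line : nodeRecords) {
            String[] parts = line.split(",");
            pairs.add(new SimpleEntry<>(parts[0], Double.parseDouble(parts[1])));
        }
        return pairs;
    }

    // REDUCE: receives the pairs from every node and sums the values per key.
    static Map<String, Double> reduce(List<Map.Entry<String, Double>> allPairs) {
        Map<String, Double> totals = new TreeMap<>();
        for (Map.Entry<String, Double> pair : allPairs) {
            totals.merge(pair.getKey(), pair.getValue(), Double::sum);
        }
        return totals;
    }

    // Runs map on each node's data, then feeds every pair to a single reducer.
    static Map<String, Double> run(List<List<String>> nodes) {
        List<Map.Entry<String, Double>> all = new ArrayList<>();
        for (List<String> node : nodes) {
            all.addAll(map(node));
        }
        return reduce(all);
    }
}
```

Calling `DailySalesSketch.run(nodes)` with one list of records per node returns the total sales keyed by date. The map step never looks at another node's data, which is what lets it run in parallel.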

You can do similar things in SQL, so what is the advantage here? First, this is not running on an enterprise architecture; think in terms of a clustered architecture. Second, the advantage here is scalability: independent portions don't have to wait for anyone else to finish.

Example: suppose we have 6 nodes and 2 reducers, one for the first quarter (Jan - Mar) and another for the second quarter (Apr - Jun). Each reducer gets data from all of the nodes.
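A minimal sketch of how keys from every node could be routed to the right reducer, assuming the two reducers split the data by quarter (Jan - Mar and Apr - Jun) and that keys are dates in `yyyy-MM-dd` form. The class and method names (`QuarterPartitionSketch`, `reducerFor`, `shuffle`) are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

class QuarterPartitionSketch {

    // Picks the reducer index for a "yyyy-MM-dd" key: 0 for Jan-Mar, 1 for Apr-Jun.
    static int reducerFor(String dateKey) {
        int month = Integer.parseInt(dateKey.substring(5, 7));
        return (month - 1) / 3;
    }

    // Sends each key emitted by each node to the input list of its reducer.
    static List<List<String>> shuffle(List<List<String>> keysPerNode, int reducers) {
        List<List<String>> reducerInputs = new ArrayList<>();
        for (int i = 0; i < reducers; i++) {
            reducerInputs.add(new ArrayList<>());
        }
        for (List<String> nodeKeys : keysPerNode) {
            for (String key : nodeKeys) {
                int target = reducerFor(key);
                if (target >= reducers) {
                    // Assumption of this sketch: the data only covers the quarters we have reducers for.
                    throw new IllegalArgumentException("No reducer for key " + key);
                }
                reducerInputs.get(target).add(key);
            }
        }
        return reducerInputs;
    }
}
```

Because every node may hold dates from both quarters, both reducer input lists end up with keys from all nodes.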

Map is like data warehouse code that extracts, transforms and loads.

It's not always necessary to write a reduce step; 6 times out of 10 you can bypass the reduce code.

MapReduce jobs can also be chained.

Incremental MapReduce: CouchDB does this. Once you ask it a question (e.g. the average sale this quarter), it keeps computing the answer for you. This makes it different from Google. CouchDB is interested in 1 to 100 DB servers, while Google is interested in petabyte-plus amounts of information, such as how to scale the web. CouchDB is a document-based database where docs are represented as JSON when interacting with it, and otherwise stored as binary.

Suppose I want to find the biggest sales, so there is sorting on each node. Sorting is done according to the key, so you need to put the right thing in the key. Basically, ask yourself "how do I want to query this later on?" The answer defines the key.

You can do a lot of different things around emit(amount, null): emit only if the date is in June, or only if the date is in the last 30 days, and otherwise emit nothing.

Things like count, sum, min, max, avg, std dev, etc. are written to the DB.

Example: we want to know the total number of sales of a certain amount. In the mapper we return k,v where k is the amount. The mapper code can have logic such as "if greater than x then return k,v". Then in the reducer we can count the values, which is why the mapper returns the amount as the key.
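As a rough sketch of this example in plain Java: the mapper emits the amount as the key only when it passes a threshold, and the reducer counts how many times each key was emitted. The names (`CountByAmountSketch`, `threshold`) and the choice to hold amounts as whole cents are assumptions made for the illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

class CountByAmountSketch {

    // MAP: emit the amount as the key only if it is greater than the threshold.
    // The value is not needed for counting, so only the keys are kept here.
    static List<Long> map(List<Long> amountsInCents, long threshold) {
        List<Long> emittedKeys = new ArrayList<>();
        for (long amount : amountsInCents) {
            if (amount > threshold) {
                emittedKeys.add(amount);
            }
        }
        return emittedKeys;
    }

    // REDUCE: count the number of emitted pairs for each key.
    static Map<Long, Integer> reduce(List<Long> emittedKeys) {
        Map<Long, Integer> counts = new TreeMap<>();
        for (Long key : emittedKeys) {
            counts.merge(key, 1, Integer::sum);
        }
        return counts;
    }
}
```

The filtering logic lives entirely in the mapper, so the reducer stays a generic count that could be reused for other keys.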

Example: if we are counting the number of items per sale, then we can return a pair from the mapper and use count in the reducer. The reducer is called once for each key.

Time series aggregation

A key can be complex; it is not necessarily a single string, int or date.

emit ( [year,month,day,location] , dollarAmount )

Then in reduce we can use _sum (again, it is applied to the value that is emitted).

You can have keys like [location, year, month, day], ordered from most significant to least.

This way we can structure the data.

Try not to write your own reducer; most of the time you can use a standard reducer.

We can group by location only or by year only (depending on which comes first in the key): there is a group_level, which is a number between 0 and the length of the key array.

[AZ,2010,6,29],s1

[AZ,2010,6,30],s2

If I say group level 3, then I get the info for [AZ,2010,6].
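The effect of group_level can be imitated in plain Java by truncating each composite key to its first N elements and summing the values that share the truncated key. This is a sketch of the idea only, not CouchDB's implementation; `GroupLevelSketch` and `sumAtLevel` are hypothetical names.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

class GroupLevelSketch {

    // Sums emitted values after cutting every key down to its first groupLevel elements.
    static Map<List<String>, Double> sumAtLevel(Map<List<String>, Double> emitted, int groupLevel) {
        Map<List<String>, Double> grouped = new LinkedHashMap<>();
        for (Map.Entry<List<String>, Double> entry : emitted.entrySet()) {
            List<String> key = entry.getKey();
            int length = Math.min(groupLevel, key.size());
            // Copy the prefix so the result does not depend on the original key list.
            List<String> prefix = new ArrayList<>(key.subList(0, length));
            grouped.merge(prefix, entry.getValue(), Double::sum);
        }
        return grouped;
    }
}
```

With keys such as [AZ,2010,6,29] and [AZ,2010,6,30], level 3 merges both under [AZ,2010,6], and level 0 collapses everything into one total under the empty key.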

Note that the output of map and reduce is simple text, which keeps communication fast.

Also note that all of this happens in real time: you don't have a system that copies data from one DB to another overnight.

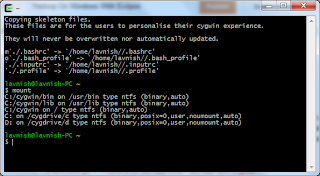

Parts of a Map Reduce Job

Job Conf

This represents a job that will be completed via the MapReduce paradigm.

JobConf conf = new JobConf(MapReduceIntro.class);

Now that you have a JobConf object, conf, you need to set the required parameters for the job. These include the input and output directory locations, the format of the input and output, and the mapper and reducer classes.

It is good practice to pass in a class that is contained in the JAR file that has your map and reduce functions. This ensures that the framework will make the JAR available to the map and reduce tasks run for your job.

Mapper

Maps are the individual tasks which transform input records into intermediate records. The transformed intermediate records need not be of the same type as the input records. A given input pair may map to zero or many output pairs.

Configuring the Reduce Phase

To configure the reduce phase, the user must supply the framework with five pieces of information:

• The number of reduce tasks; if zero, no reduce phase is run

• The class supplying the reduce method

• The input key and value types for the reduce task; by default, the same as the reduce output

• The output key and value types for the reduce task

• The output file type for the reduce task output
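A configuration fragment along these lines could supply those five pieces of information using Hadoop's older org.apache.hadoop.mapred API. It is a sketch under assumptions, not a definitive job: it needs the Hadoop libraries on the classpath, the "input" and "output" paths are placeholders, and IdentityMapper and IdentityReducer are stand-ins for your own map and reduce classes.

```java
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapred.FileInputFormat;
import org.apache.hadoop.mapred.FileOutputFormat;
import org.apache.hadoop.mapred.JobClient;
import org.apache.hadoop.mapred.JobConf;
import org.apache.hadoop.mapred.KeyValueTextInputFormat;
import org.apache.hadoop.mapred.TextOutputFormat;
import org.apache.hadoop.mapred.lib.IdentityMapper;
import org.apache.hadoop.mapred.lib.IdentityReducer;

public class MapReduceIntro {
    public static void main(String[] args) throws Exception {
        // Passing a class from the job JAR lets the framework locate that JAR.
        JobConf conf = new JobConf(MapReduceIntro.class);
        conf.setJobName("MapReduceIntro");

        // Input and output locations and the input format (placeholder paths).
        FileInputFormat.setInputPaths(conf, new Path("input"));
        FileOutputFormat.setOutputPath(conf, new Path("output"));
        conf.setInputFormat(KeyValueTextInputFormat.class);
        conf.setMapperClass(IdentityMapper.class);

        // 1. Number of reduce tasks (zero would skip the reduce phase).
        conf.setNumReduceTasks(1);
        // 2. Class supplying the reduce method (stand-in reducer).
        conf.setReducerClass(IdentityReducer.class);
        // 3. Reduce input (map output) key and value types.
        conf.setMapOutputKeyClass(Text.class);
        conf.setMapOutputValueClass(Text.class);
        // 4. Reduce output key and value types.
        conf.setOutputKeyClass(Text.class);
        conf.setOutputValueClass(Text.class);
        // 5. Output file type for the reduce output.
        conf.setOutputFormat(TextOutputFormat.class);

        // Submit the job and wait for it to finish.
        JobClient.runJob(conf);
    }
}
```

The map output types in step 3 are set explicitly here for clarity; when they are left out, the framework falls back to the reduce output types from step 4.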